Changelog

Trigger.dev is now HIPAA ready

Trigger.dev Cloud now meets HIPAA Business Associate requirements. Sign a BAA and run workloads that process Protected Health Information on our managed infrastructure.

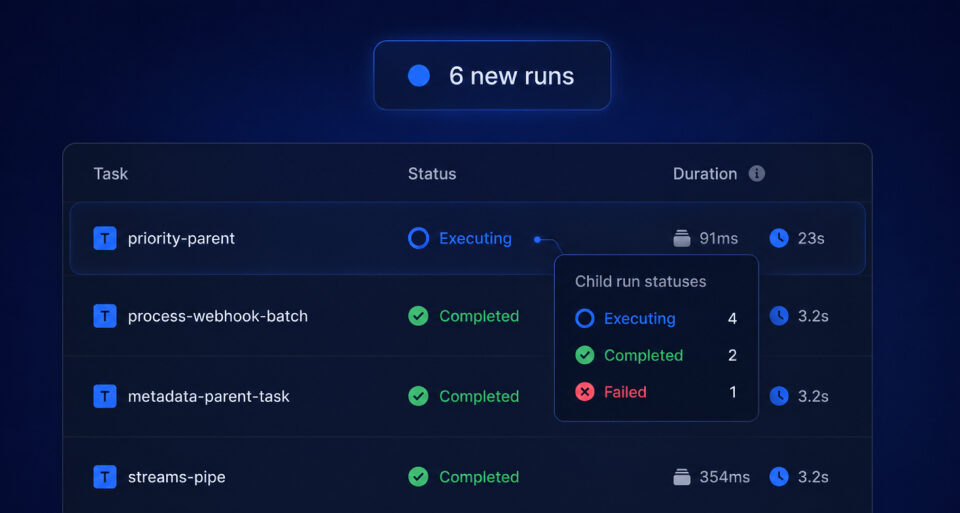

Runs page updates live

The Runs page now updates in place: statuses change live, a banner flags new runs, and parent tooltips show child-run breakdowns.

Katia Bulatova

Trigger.dev v4.4.6

Faster failure on uncaught exceptions and a fix for dev workers spinning at 100% CPU.

Trigger.dev v4.4.5

Run replay detection with ctx.run.isReplay, a --no-browser CLI flag for headless environments, and a 24-hour grace window for API key rotations.
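One way replay detection might be used is to skip customer-facing side effects when a run is a replay. The sketch below is self-contained and does not import the SDK: only the `ctx.run.isReplay` property comes from the release note, while the `RunContextLike` type, the `id` field, and `shouldSendNotification` are hypothetical names used for illustration.

```typescript
// Hypothetical minimal shape of a run context. Only `run.isReplay` is taken
// from the release note; `id` is an illustrative assumption.
interface RunContextLike {
  run: { id: string; isReplay: boolean };
}

// Decide whether a side effect (for example, sending an email) should fire.
// Replays skip it so a customer is not notified twice.
function shouldSendNotification(ctx: RunContextLike): boolean {
  return !ctx.run.isReplay;
}

const originalRun: RunContextLike = { run: { id: "run_1", isReplay: false } };
const replayedRun: RunContextLike = { run: { id: "run_2", isReplay: true } };

console.log(shouldSendNotification(originalRun)); // true
console.log(shouldSendNotification(replayedRun)); // false
```

Keeping the check in a small pure function makes it easy to unit test without a running task.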

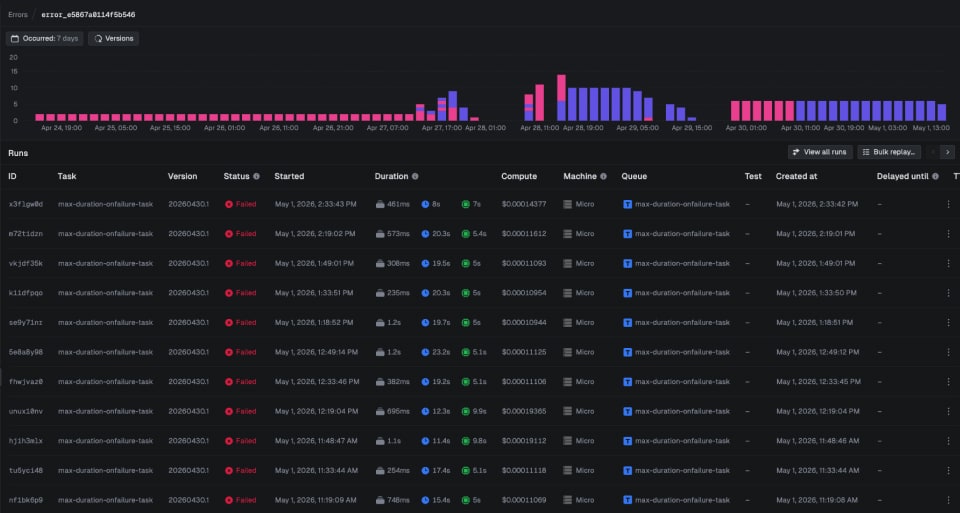

Errors: find and fix your bugs faster

Use error alerts to quickly track down what’s causing your runs to fail, then bulk replay them once you’ve shipped the fix.

AWS PrivateLink: connect your AWS resources without exposing them

Reach private databases, caches, and APIs from your tasks over AWS PrivateLink. No public endpoints, no IP allowlists, no VPN. Set it up manually, with AI, or with a generated Terraform script.

Input streams: send data into running tasks

Bidirectional task communication. Send typed data into running tasks from your backend or frontend, with four receiving patterns ranging from suspending (which frees compute) to non-blocking.

Vercel integration

Connect your Vercel project to Trigger.dev and never run a manual deploy command again. Automatic deploys, env var sync, and atomic deployments.

Trigger.dev v4.4.3

Error tracking dashboard, Supabase environment variable sync, and dev run auto-cancel on CLI exit.

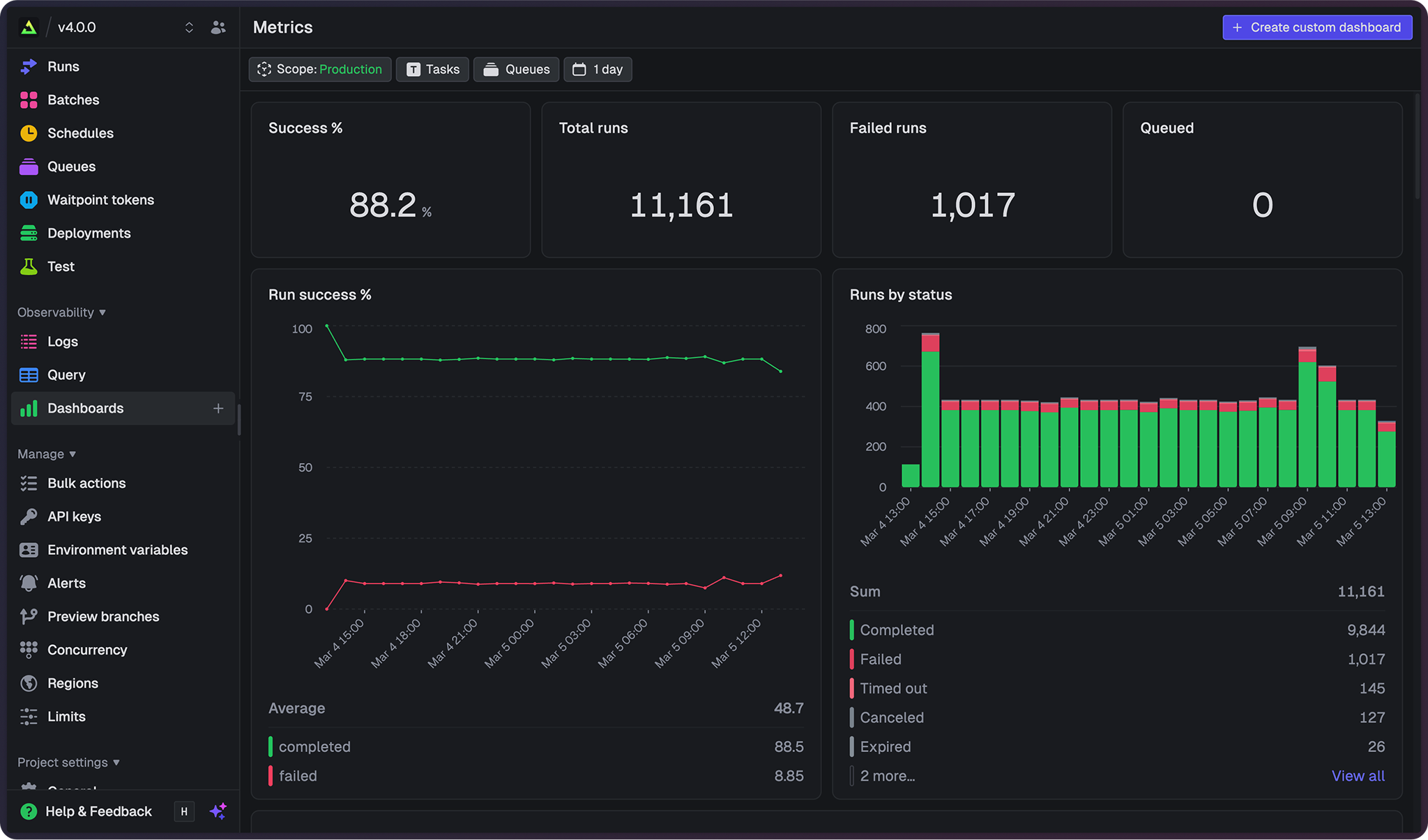

Query & Dashboards: analytics for your Trigger.dev data

Analyze your data using SQL for one-off queries or build custom dashboards with charts and tables.

Trigger.dev v4.4.2

Input streams for bidirectional task communication, batch queue performance improvements, and security hardening.

Trigger.dev v4.4.0

Metrics dashboards and query engine, Vercel integration, debounce maxDelay, and more.

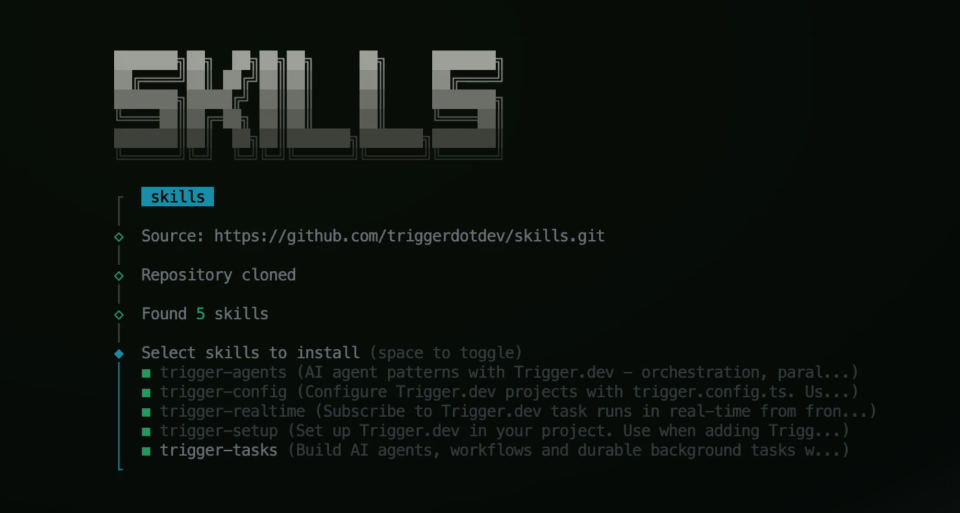

Trigger.dev agent skills for AI coding assistants

Install our official agent skills to teach any AI coding assistant best practices for writing tasks, agents, and workflows.

Trigger.dev v4.3.3

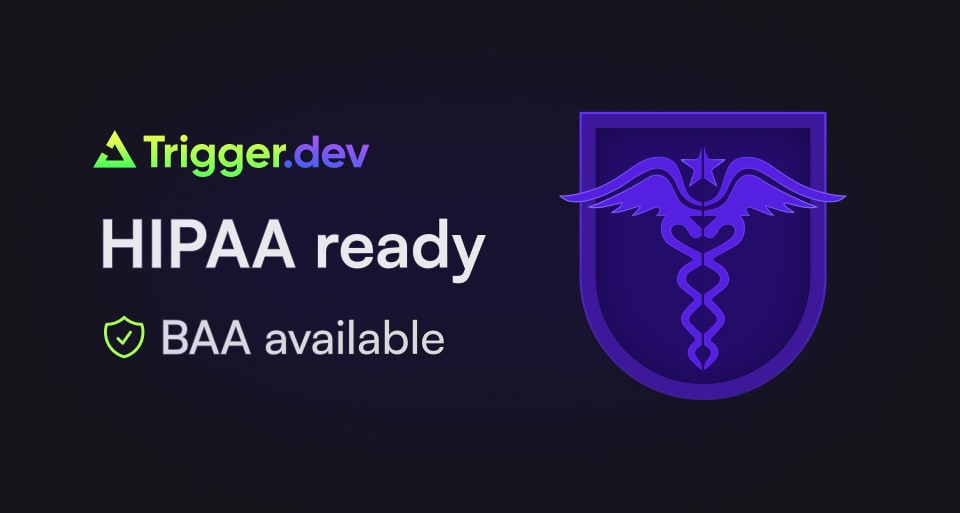

AI SDK 6 support, a new limits page, better date and time filtering, plus improvements, bug fixes, and server changes.

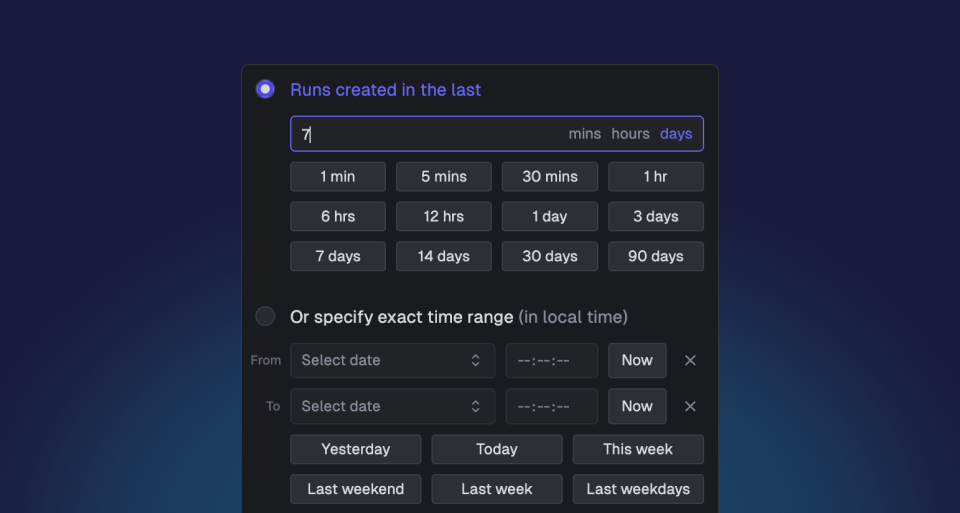

Improved date and time filtering in the dashboard

Redesigned date picker with visual calendar navigation, time precision controls, and quick preset options for faster run filtering.

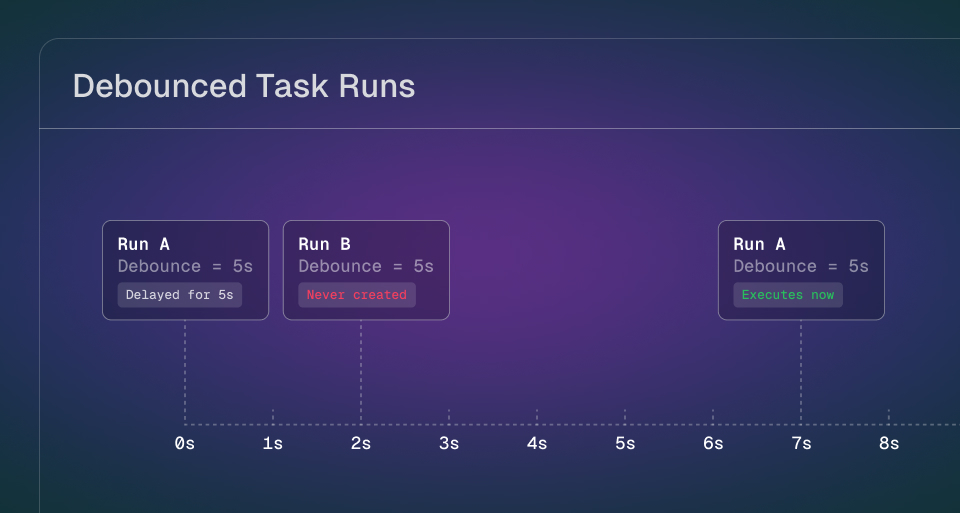

Debounced task runs

Consolidate multiple triggers into a single execution by debouncing task runs with a unique key and delay window.
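To make the idea concrete, here is a minimal sketch of key-based debouncing as a pure function over a list of trigger events. It is a generic trailing debounce, not Trigger.dev's implementation or API: the `TriggerEvent` and `Execution` types, the `consolidate` function, and the choice to keep the latest payload are all assumptions made for illustration.

```typescript
// Hypothetical types for illustration; not part of the Trigger.dev SDK.
interface TriggerEvent { key: string; atMs: number; payload: string }
interface Execution { key: string; runAtMs: number; payload: string }

// Collapse triggers that share a key and arrive inside the delay window
// into one execution. Each new trigger restarts the window (trailing
// debounce) and, by assumption, replaces the payload with the latest one.
function consolidate(events: TriggerEvent[], delayMs: number): Execution[] {
  const sorted = [...events].sort((a, b) => a.atMs - b.atMs);
  const pending = new Map<string, Execution>();
  const finished: Execution[] = [];

  for (const ev of sorted) {
    const current = pending.get(ev.key);
    if (current !== undefined && ev.atMs < current.runAtMs) {
      // Still inside the window: push the run time back, keep latest payload.
      current.runAtMs = ev.atMs + delayMs;
      current.payload = ev.payload;
    } else {
      // The window has elapsed (or this is the first trigger for the key).
      if (current !== undefined) finished.push(current);
      pending.set(ev.key, { key: ev.key, runAtMs: ev.atMs + delayMs, payload: ev.payload });
    }
  }

  pending.forEach((execution) => finished.push(execution));
  return finished.sort((a, b) => a.runAtMs - b.runAtMs);
}

const executions = consolidate(
  [
    { key: "user-1", atMs: 0, payload: "a" },
    { key: "user-2", atMs: 100, payload: "x" },
    { key: "user-1", atMs: 300, payload: "b" },
    { key: "user-1", atMs: 600, payload: "c" },
  ],
  1000,
);

// Four triggers collapse into two executions, one per key.
console.log(executions);
```

In this example the three `user-1` triggers become a single execution scheduled one delay window after the last of them, while `user-2` runs independently.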